Wallarm Connector for Kong Ingress Controller

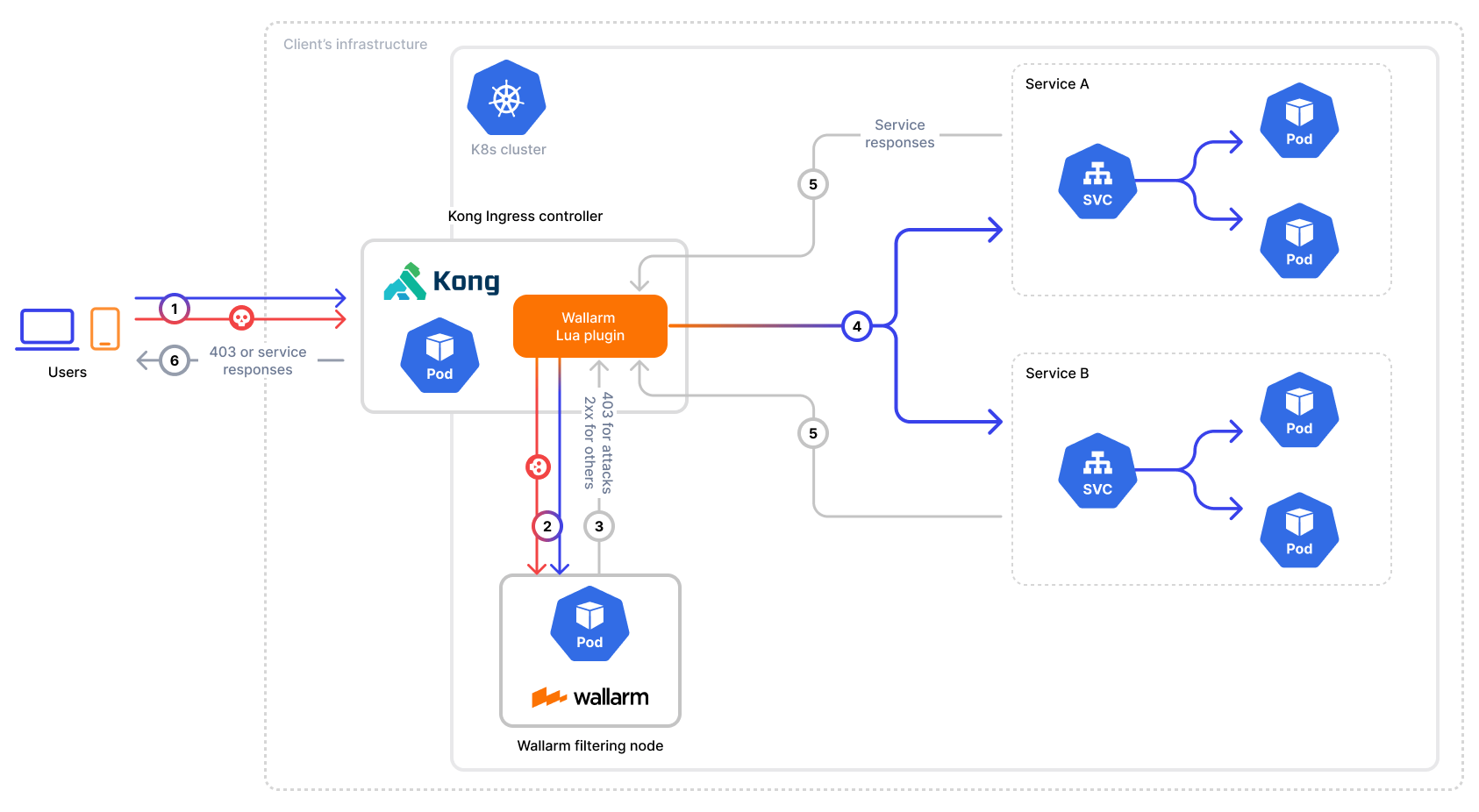

To secure APIs managed by Kong Ingress Controller, Wallarm provides a connector that integrates into your Kubernetes environment. By deploying the Wallarm filtering node and connecting it to Kong via a custom Lua plugin, incoming traffic is analyzed in real time, allowing Wallarm to mitigate malicious requests before they reach your services.

The Wallarm connector for Kong Ingress Controller supports only in-line mode.

Use cases

Among all supported Wallarm deployment options, this solution is the recommended one for securing APIs managed by the Kong Ingress Controller running the Kong API Gateway.

Limitations

This setup allows fine-tuning Wallarm only via the Wallarm Console UI. Some Wallarm features that require file-based configuration are not supported in this implementation, such as:

Requirements

To proceed with the deployment, ensure that you meet the following requirements:

- Kong Ingress Controller deployed and managing your API traffic in a Kubernetes cluster

- Helm v3 package manager

- Access to https://us1.api.wallarm.com (US Wallarm Cloud) or to https://api.wallarm.com (EU Wallarm Cloud)

- Access to https://charts.wallarm.com to add the Wallarm Helm chart

- Access to the Wallarm repositories on Docker Hub: https://hub.docker.com/r/wallarm

- Access to the IP addresses below for downloading updates to attack detection rules, as well as retrieving precise IPs for your allowlisted, denylisted, or graylisted countries, regions, or data centers

- Administrator access to Wallarm Console for US Cloud or EU Cloud
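To check the network-related requirements before you start, a small script along these lines may help. It is a minimal sketch, not part of the Wallarm tooling: the function names `wallarm_api_url` and `check_wallarm_access` and the 5-second timeout are illustrative assumptions, and it only probes the URLs listed above, not the IP addresses used for rule updates.

```shell
#!/usr/bin/env bash
# Sketch: probe the Wallarm endpoints named in the requirements list.
# Function names and the timeout are illustrative, not Wallarm-provided.

# Map a cloud name ("us" or "eu") to its Wallarm API endpoint.
wallarm_api_url() {
  case "$1" in
    us) echo "https://us1.api.wallarm.com" ;;
    eu) echo "https://api.wallarm.com" ;;
    *)  echo "unknown cloud: $1 (expected 'us' or 'eu')" >&2; return 1 ;;
  esac
}

# Report, for each endpoint, whether any HTTP response came back.
check_wallarm_access() {
  local api_url url
  api_url="$(wallarm_api_url "$1")" || return 1
  for url in "$api_url" "https://charts.wallarm.com" "https://hub.docker.com/r/wallarm"; do
    # Without --fail, curl exits 0 on any HTTP status, so this tests
    # reachability only, not authorization.
    if curl --silent --output /dev/null --max-time 5 "$url"; then
      echo "reachable: $url"
    else
      echo "NOT reachable: $url"
    fi
  done
}
```

After sourcing the script, run `check_wallarm_access us` or `check_wallarm_access eu` from a machine with the same egress rules as your cluster nodes.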

Deployment

To secure APIs managed by Kong Ingress Controller, follow these steps:

- Deploy the Wallarm filtering node service in your Kubernetes cluster.

- Obtain and deploy the Wallarm Lua plugin to route incoming traffic from the Kong Ingress Controller to the Wallarm filtering node for analysis.

1. Deploy a Wallarm Native Node

To deploy the Wallarm node as a separate service in your Kubernetes cluster, follow the instructions.

2. Obtain and deploy the Wallarm Lua plugin

- Contact support@wallarm.com to obtain the Wallarm Lua plugin code for your Kong Ingress Controller.

- Create a ConfigMap with the plugin code:

  `<KONG_NS>` is the namespace where your Kong Ingress Controller is deployed.

- Update your `values.yaml` file for Kong Ingress Controller to load the Wallarm Lua plugin:

- Update Kong Ingress Controller:

- Activate the Wallarm Lua plugin by creating a `KongClusterPlugin` resource and specifying the Wallarm node service address:

  ```shell
  echo '
  apiVersion: configuration.konghq.com/v1
  kind: KongClusterPlugin
  metadata:
    name: kong-lua
    annotations:
      kubernetes.io/ingress.class: kong
  config:
    wallarm_node_address: "http://native-processing.wallarm-node.svc.cluster.local:5000"
  plugin: kong-lua
  ' | kubectl apply -f -
  ```

  `wallarm-node` is the namespace where the Wallarm node service is deployed.

- Add the following annotations to your Ingress or Gateway API route to enable the plugin for selected services:
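The ConfigMap, `values.yaml`, upgrade, and annotation steps above could look roughly like the following. Treat it as a sketch under stated assumptions rather than the official procedure: the namespace `kong`, the release name `kong`, the `kong/ingress` chart, the plugin directory `./kong-lua`, and the ConfigMap name `kong-lua` (chosen to match the `plugin: kong-lua` value in the `KongClusterPlugin`) are all placeholders, and the `gateway.plugins.configMaps` layout assumes the `kong/ingress` chart. Only the `konghq.com/plugins` annotation is a standard Kong Ingress Controller mechanism.

```shell
#!/usr/bin/env bash
# Sketch of the plugin deployment steps. Every default below is an assumption;
# override it through the environment to match your cluster.

KONG_NS="${KONG_NS:-kong}"                     # assumption: Kong namespace
KONG_RELEASE="${KONG_RELEASE:-kong}"           # assumption: Helm release name
KONG_CHART="${KONG_CHART:-kong/ingress}"       # assumption: chart used to install Kong
PLUGIN_DIR="${PLUGIN_DIR:-./kong-lua}"         # hypothetical path to the code received from support
EXISTING_VALUES="${EXISTING_VALUES:-values.yaml}"
PLUGIN_VALUES="${PLUGIN_VALUES:-wallarm-plugin-values.yaml}"

deploy_wallarm_plugin() {
  # Store the Lua files in a ConfigMap named after the plugin.
  kubectl create configmap kong-lua --from-file="$PLUGIN_DIR" -n "$KONG_NS" || return 1

  # Write a values fragment that mounts the ConfigMap as a custom plugin.
  # With the kong/kong chart, the `plugins` key sits at the top level instead.
  cat > "$PLUGIN_VALUES" <<'EOF'
gateway:
  plugins:
    configMaps:
      - name: kong-lua
        pluginName: kong-lua
EOF

  # Apply the existing values plus the fragment (later files take precedence).
  helm upgrade --install "$KONG_RELEASE" "$KONG_CHART" -n "$KONG_NS" \
    --values "$EXISTING_VALUES" --values "$PLUGIN_VALUES"
}

# Enable the plugin for one Ingress: $1 = Ingress name, $2 = its namespace.
annotate_ingress() {
  kubectl annotate ingress "$1" -n "$2" konghq.com/plugins=kong-lua --overwrite
}
```

For example, `deploy_wallarm_plugin` followed by `annotate_ingress my-api default` would enable the plugin for a hypothetical Ingress named `my-api`. If your `values.yaml` already defines `gateway.plugins.configMaps`, merge the entry by hand, because Helm replaces lists instead of merging them.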

Testing

To test the functionality of the deployed connector, follow these steps:

-

Verify that the Wallarm pods are up and running:

wallarm-nodeis the namespace where the Wallarm node service is deployed.Each pod status should be STATUS: Running or READY: N/N. For example:

-

Retrieve the Kong Gateway IP (which is usually configured as a

LoadBalancerservice): -

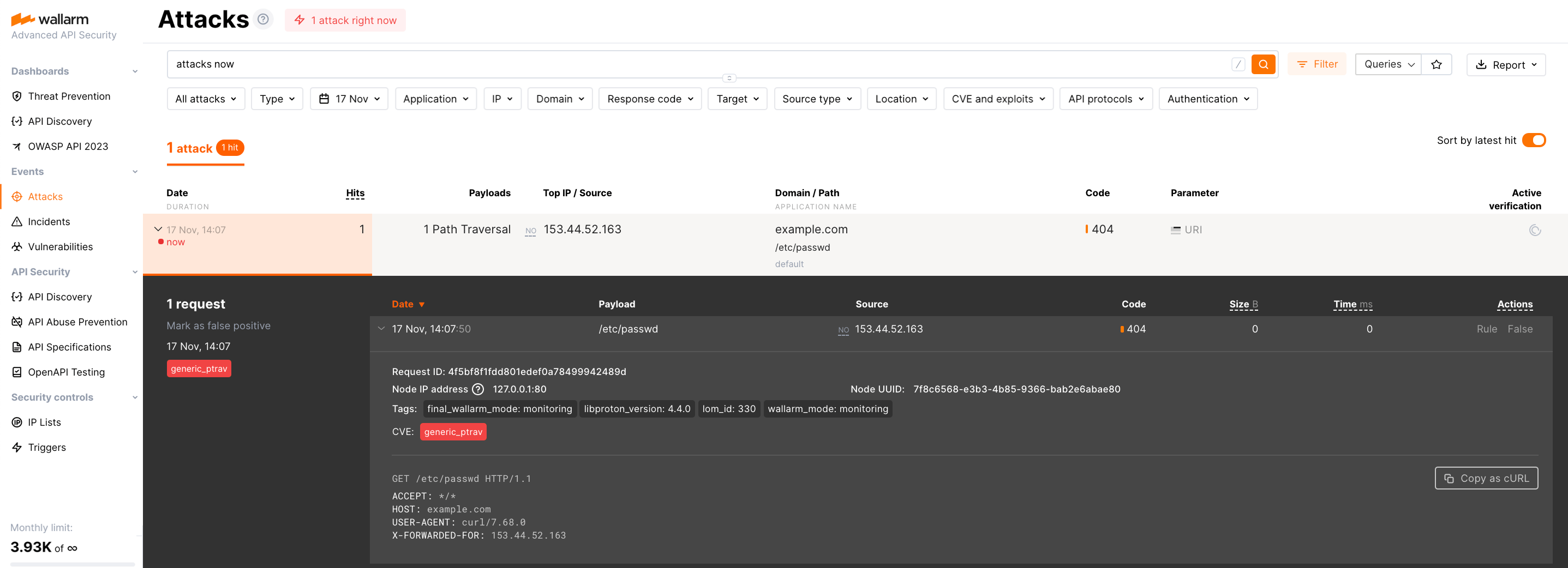

Send the request with the test Path Traversal attack to the balancer:

Since the node operates in the monitoring mode by default, the Wallarm node will not block the attack but will register it.

-

Open Wallarm Console → Attacks section in the US Cloud or EU Cloud and make sure the attack is displayed in the list.
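The command-line part of these checks can be sketched as below. The namespace `kong`, the service name `kong-gateway-proxy`, and the helper names are assumptions that depend on how Kong was installed, and the `/etc/passwd` request is assumed here as a typical Path Traversal probe; adjust all of them to your setup.

```shell
#!/usr/bin/env bash
# Sketch of the test steps. Defaults marked "assumption" vary per installation.

WALLARM_NS="${WALLARM_NS:-wallarm-node}"                 # namespace used in this guide
KONG_NS="${KONG_NS:-kong}"                               # assumption: Kong namespace
KONG_PROXY_SVC="${KONG_PROXY_SVC:-kong-gateway-proxy}"   # assumption: proxy Service name

# List the Wallarm node pods so STATUS and READY can be inspected.
list_wallarm_pods() {
  kubectl get pods -n "$WALLARM_NS"
}

# Print the external IP of the Kong proxy Service.
# Some providers publish a hostname instead; then read `.hostname`, not `.ip`.
kong_proxy_ip() {
  kubectl get svc "$KONG_PROXY_SVC" -n "$KONG_NS" \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Send the test request: $1 = proxy address, $2 = optional Host header
# for Ingress rules that match on a hostname. Prints the HTTP status code.
send_test_attack() {
  local ip="$1" host="${2:-}"
  local args=(--silent --output /dev/null --write-out '%{http_code}\n')
  if [ -n "$host" ]; then
    args+=(--header "Host: $host")
  fi
  curl "${args[@]}" "http://$ip/etc/passwd"
}
```

A typical sequence is `list_wallarm_pods`, then `send_test_attack "$(kong_proxy_ip)"`. In monitoring mode the printed status code comes from your backend, since the request is registered but not blocked.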

Upgrading the Wallarm Lua plugin

To upgrade the deployed Wallarm Lua plugin to a newer version:

- Contact support@wallarm.com to obtain the updated Wallarm Lua plugin code for your Kong Ingress Controller.

- Update the ConfigMap with the plugin code:

  `<KONG_NS>` is the namespace where your Kong Ingress Controller is deployed.

Plugin upgrades may require a Wallarm node upgrade, especially for major version updates. See the Wallarm Native Node changelog for release updates and upgrade instructions. Regular node updates are recommended to avoid deprecation and simplify future upgrades.
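One common way to update an existing ConfigMap in place is to regenerate its manifest with a client-side dry run and pipe it to `kubectl apply`. The sketch below uses that general Kubernetes idiom; it is not confirmed as the Wallarm-recommended command. The ConfigMap name `kong-lua`, the path `./kong-lua`, the namespace `kong`, and the Deployment name `kong-gateway` are assumptions, as is the need to restart the gateway pods so that Kong reloads the Lua code.

```shell
#!/usr/bin/env bash
# Sketch: replace the plugin ConfigMap contents, then restart Kong.
# All defaults are assumptions; adapt them to your installation.

KONG_NS="${KONG_NS:-kong}"                              # assumption: Kong namespace
PLUGIN_DIR="${PLUGIN_DIR:-./kong-lua}"                  # hypothetical path to the updated code
KONG_DEPLOYMENT="${KONG_DEPLOYMENT:-kong-gateway}"      # assumption: gateway Deployment name

# Render the ConfigMap locally and apply it over the existing one.
update_wallarm_plugin() {
  kubectl create configmap kong-lua --from-file="$PLUGIN_DIR" -n "$KONG_NS" \
    --dry-run=client -o yaml | kubectl apply -f -
}

# Restart the gateway pods so the new plugin code is loaded (assumed necessary).
restart_kong_gateway() {
  kubectl rollout restart deployment "$KONG_DEPLOYMENT" -n "$KONG_NS"
}
```

Run `update_wallarm_plugin` first and `restart_kong_gateway` afterwards, then repeat the checks from the Testing section.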